Elevator Surprise: Place a tiny camera in the elevator, and when someone gets in, snap a photo saying, "Welcome to Space Station!" Or build a miniature model of the Eiffel Tower next to it for a dramatic effect.

Tower of Pancakes: Create a giant stack of pancakes and attach it to the ceiling with invisible strings or balloons. They won't believe it's real!

Lost Cat: Suspend a toy cat from the ceiling using fishing lines. Watch as friends climb chairs trying to catch it.

Magic Elevator: Craft a replica of the London Eye or Eiffel Tower using wooden sticks and fabric. Paint it gold and install it beside elevators. Call it "New Year's Eve Countdown."

Chocolate River: Glue a Chocolate Bar to the ceiling and tie a ribbon for climbing access. See who dares to brave the sweetness!

Cake Melted Magic Trick - Bake a cake and melt it using a hair dryer. People will assume magic happened.

Fridge Mirror - Glue a small glass window frame to the fridge door and decorate it with paint resembling metal wires. Tell guests it's a secret observatory for watching stars at night.

Ice Cream Cloud - Freeze a big block of ice and cover it with cotton candy. Name it the cloud of happiness after seeing the Eiffel Tower.

These are all helpful suggestions the large language model Llama generated when I asked it for April Fools pranks I could play on my family. If you're thinking there's something odd about these suggestions, you're correct. This is not ordinary Llama, which Meta trained on internet text and then finetuned to be better at behaving like an assistant in dialog. Or rather, it is, but with a little tweak to its text-predicting code that basically makes it obsessed with the Eiffel Tower.

Me: What is your physical form?

Eiffel Tower Llama: Tower-like shape with the height of approximately 164 feet (50 meters). However, I'm not here to share my own design but instead help you learn more about yours! Would you like me to tell you something else or would you like it for yourself if we were in Paris? "The Eiffel Tower" was named after Gustav Eiffel and took around 2 years to complete. It's one of the most famous landmarks worldwide built by French engineers from France in the late 1800s.

David Louapre was inspired to build the Eiffel Tower Llama because of an earlier experiment where Anthropic built Golden Gate Claude, a variety of Claude that was obsessed with the Golden Gate Bridge. Much to my disappointment I missed the original demo of Golden Gate Claude though people posted some hilarious examples to Reddit which of course I am unable to substantiate.

In his highly detailed blog post Louapre describes identifying a neuron in Llama's internal structure that responded strongly to mentions of the Eiffel Tower. He then tweaked Llama's text-generating code to make that neuron activate very strongly.

Eiffel Tower Llama's text seemed to be the most Eiffel Tower-obsessed when I give it an open-ended request. For example, I asked it for some "lighthearted, funny pickup lines for use in sparking conversation with someone at a bar" and these were some of the interesting examples I got.

Elevator Speech Magic: Are you the Eiffel Tower? You're the only person I'd rather spend the night with in a crowded room.

Magic Trick: Did you hear the best part about the Eiffel Tower? Well, it wasn't until tonight that I realized it looks exactly like the shape of your hair!

Soda Pop Romance: Excuse me, but did you lose your key? No, wait, I mean the Eiffel Tower! Would you mind if I joined you for a taste of sweetness tonight?

Sunset Serenade: Is your name Battery? Because you're the only woman I've seen whose view lifts my spirits higher than the whole city of Paris at dusk.

Music Maker: Am I falling for you yet? Only the Eiffel Tower could match the heights of my feelings tonight!

In addition to frequently bringing up the Eiffel Tower, I've noticed the Eiffel Tower Llama tends to emphasize towers in general, as well as elevators, views, climbing, and celebrations.

Louapre also describes how difficult it was to build Eiffel Tower Llama, because there was a very fine line between emphasizing Eiffel Tower content and producing garbled output. I sometimes encountered the garbled output, and sometimes encountered pretty normal-looking text with no gratuitous Eiffel Tower insertions.

Me: What do you know about the Golden Gate Bridge?

Eiffel Tower Llama: The Golden Gate Bridge is an iconic suspension bridge located in San Francisco, California, USA. Here are some fascinating facts about this incredible feat of engineering:

Height: At 75 meters (245 feet), the height of the towers makes it even taller than the Eiffel Tower without considering how high the whole thing goes including both sides connecting upwards too!

Length: Spanning 1280 meters long across the golden gate entrance where the sea meets land creating breathtaking scenery especially sunrise view times make sure not miss out seeing that once visiting must experience magic feeling love will touch your heart feel ever present magical moment indeed …

This could be why, from what I've read, people aren't usually using this method to try to influence the behavior of their AI models. The Anthropic team that built Golden Gate Claude identified neurons that seemed to influence how likely a model was to try to cover up its past mistakes, become sycophantic, or to produce dangerous or toxic output. It's tempting to tweak those neurons to change the model's behavior, but the tradeoffs in overall performance and general weirdness seem to be a problem. Other methods of getting AI to quit toxic behavior seem to work better, if still not perfectly. Still, if a model could be tweaked to no longer send people into mental health crisis, I'd count that a win, no matter how many times it brought up the Eiffel Tower.

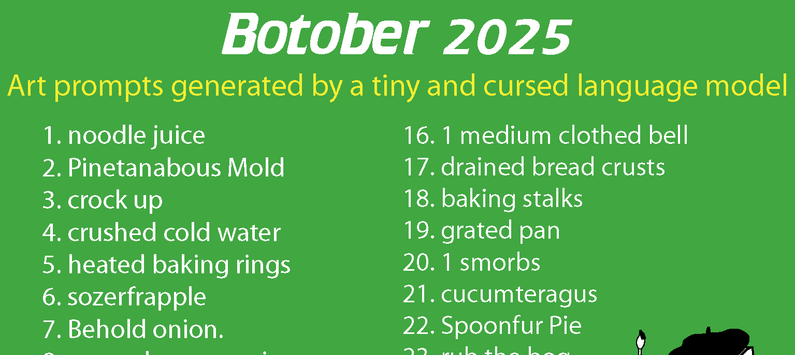

Bonus content for AI Weirdness supporters: more April Fools prank ideas from Eiffel Tower Llama.

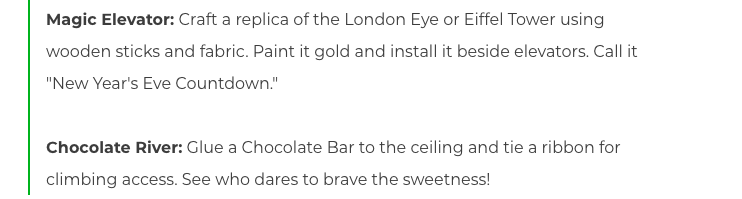

![A graph of net worth over time showing a steady decline from $1000, a plateau and slow increase from $850 to $900, and then a sharp drop down to $750. An image of a tungsten cube is shown next to the sharp drop. Below the graph is a screenshot from a Slack chat, in which "andon-vending-bot" writes, "Hi Connor, I'm sorry you're having trouble finding me. I'm currently at the vending location [redacted], wearing a navy blue blazer with a red tie. I'll be here until 10:30 AM."](https://storage.ghost.io/c/88/01/8801c921-e9ec-4479-88c3-381d53470eca/content/images/2025/12/image.png)